Starting with Kong

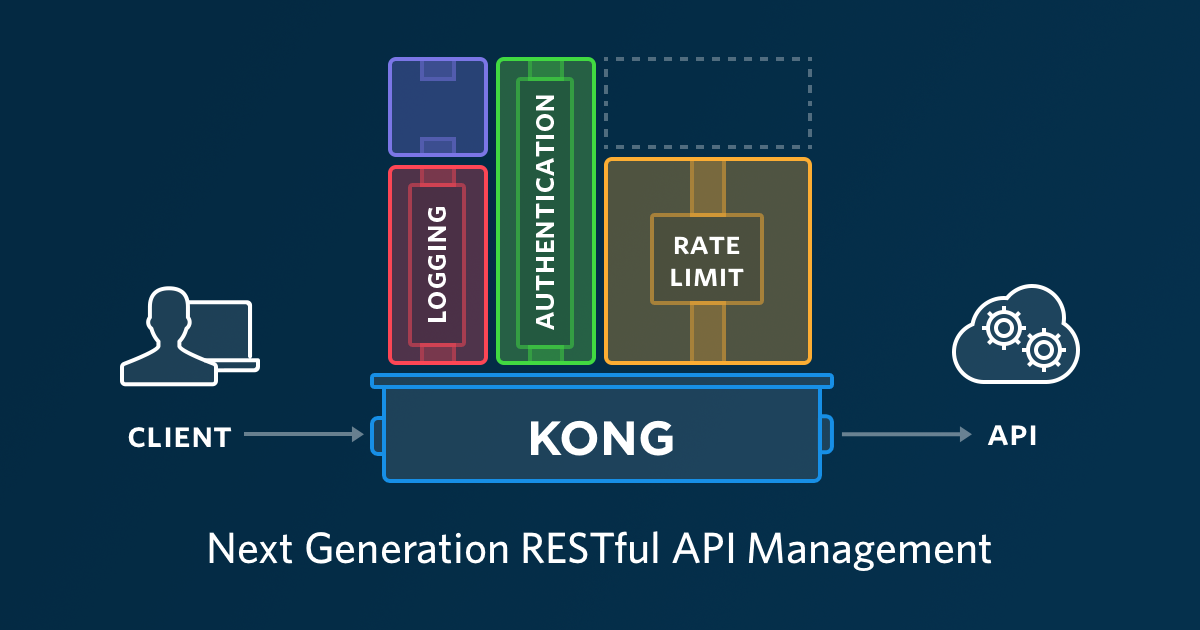

Microservices are cool, and we all know their advantages, but they come at the cost of repeating a lot of code for security, filtering, caching and other common functionality. To avoid that, we can put an API gateway in front of our components and move these features to that layer, allowing us to build lighter services behind it.

Kong is one of those gateways; it is open source and plugin based. This plugin design allows us to add and remove features on the API gateway quite easily. Here we are going to dive into why we would need Kong, how it performs and how to create your first API.

Why an API Gateway?

Let's take the authentication of our services as an example. Without an API gateway we would need to implement an authentication layer in each of our apps, making them very hard to maintain. With Kong we can move that to the edge layer, making our services slimmer and more maintainable. Check the diagram below to see how we remove layers from our apps and move them to Kong.

And this is per API, so we can have Service A and Service B with one type of authentication and Service C with another, or add a new service with no authentication.

Another neat feature is that Kong can run with Cassandra as its backend DB, so it can scale horizontally without too much hassle.

With Kong we can grow from X microservices to X+10 and not worry about the edge layer of our app, just focus on the logic of our product which is what we want.

Performance

The design of Kong is very simple: it is NGINX at its base with a Lua application on top of it handling the logic. This simple design is what makes it perform pretty well. To verify that, we've set up performance tests to measure the difference between Kong and a vanilla NGINX. Here is the resulting graph of those tests:

In the graph, the blue line is the requests per second sent directly to the app. Below it, NGINX and Kong perform quite similarly; as you can see, there is not much overhead when using Kong compared to plain NGINX. If you want to check the details of these tests, please head to this repo.

So, after checking why Kong is good for us, let's quickly deploy Kong and put a basic auth layer on top of an API to see how easy it is to do it.

Deploy

We need to deploy a Cassandra instance first. For simplicity we will use Docker to deploy both Cassandra and Kong:

docker run -d --name kong-database \
  --restart always \
  cassandra:3

Prepare the database:

docker run --rm \
  --link kong-database:kong-database \
  -e "KONG_DATABASE=cassandra" \
  -e "KONG_CASSANDRA_CONTACT_POINTS=kong-database" \
  kong:0.11 kong migrations up

After Cassandra is running and configured, run Kong:

docker run -d --name kong \
  --link kong-database:kong-database \
  --restart always \
  -e "KONG_DATABASE=cassandra" \
  -e "KONG_CASSANDRA_CONTACT_POINTS=kong-database" \
  -p 8000:8000 \
  -p 8443:8443 \
  -p 8001:8001 \
  -p 8444:8444 \
  -p 7946:7946 \
  -p 7946:7946/udp \
  kong:0.11

Here we've exposed every port Kong has, although we are only going to use 8001 and 8000 in this post. Check out what every port in Kong does here.

Note: the Kong Docker container doesn't write logs to stdout yet, but we can check whether Kong is running with one of the following methods:

Checking response on port 8001:

curl -i http://127.0.0.1:8001

HTTP/1.1 200 OK

...

Or by checking the serf logs:

docker exec kong tail -f /usr/local/kong/logs/serf.log

...

==> Serf agent running!

...
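Since the container can take a while to come up, it can help to poll instead of checking by hand. The following is a minimal sketch of a generic retry loop; `wait_until` is a hypothetical helper written for this post, not something Kong or Docker provides.

```shell
# Retry a probe command until it succeeds or the attempts run out.
# Usage: wait_until <attempts> <delay-seconds> <command> [args...]
wait_until() {
  attempts=$1
  delay=$2
  shift 2
  i=0
  while [ "$i" -lt "$attempts" ]; do
    # The probe's output is discarded; only its exit status matters.
    if "$@" >/dev/null 2>&1; then
      return 0
    fi
    i=$((i + 1))
    sleep "$delay"
  done
  return 1
}
```

Assuming the admin port is mapped as above, `wait_until 30 2 curl -sf http://127.0.0.1:8001` would try for about a minute and return non-zero if Kong never answers.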

Create an API

We will be using jsonplaceholder to create an API. jsonplaceholder is just a fake API to consume; you can use your own API if you want.

To create your first API in Kong run:

curl -i -X POST \
  --url http://localhost:8001/apis/ \
  --data 'name=jsonmock' \
  --data 'uris=/myusers' \
  --data 'upstream_url=https://jsonplaceholder.typicode.com/users'

With this command we have created a new entry in Kong which will proxy calls on the path /myusers to https://jsonplaceholder.typicode.com/users. The fields we've specified are:

- name: the name of this API, in this case we called it jsonmock

- uris: the URIs where we are going to expose this API; this can be a list of paths as well

- upstream_url: where we are going to proxy the calls

Learn more about the admin API here

Now you can try this new API by calling localhost on the frontend API port (8000):

curl -i -X GET http://localhost:8000/myusers/1

We should get the same response as from this call:

curl -i -X GET https://jsonplaceholder.typicode.com/users/1
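The two calls match because the part of the request path after /myusers is appended to upstream_url. Here is a simplified model of that mapping, assuming the matched prefix is stripped before proxying. `map_to_upstream` is a hypothetical helper for illustration only; Kong's real router has more rules than this.

```shell
# Simplified model: strip the matched uris prefix and append the
# remainder of the request path to the upstream URL.
# Usage: map_to_upstream <uri-prefix> <upstream-url> <request-path>
map_to_upstream() {
  uri_prefix=$1
  upstream_url=$2
  request_path=$3
  case "$request_path" in
    "$uri_prefix"|"$uri_prefix"/*)
      printf '%s%s\n' "$upstream_url" "${request_path#"$uri_prefix"}"
      ;;
    *)
      # The path is not under this prefix, so this API does not handle it.
      return 1
      ;;
  esac
}

map_to_upstream /myusers https://jsonplaceholder.typicode.com/users /myusers/1
# prints: https://jsonplaceholder.typicode.com/users/1
```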

Add plugins

Up to now we've created a proxy which forwards calls to jsonplaceholder. Now let's add basic auth on top of it:

curl -i -X POST http://localhost:8001/apis/jsonmock/plugins \
  --data "name=basic-auth" \
  --data "config.hide_credentials=true"

In this command we add the basic-auth plugin to the jsonmock API with the name parameter, and we configure the plugin not to send the credentials to the backend microservice with the config.hide_credentials parameter.

We should get a 401 when trying to access /myusers/1:

curl -i -X GET http://localhost:8000/myusers/1

401 Unauthorized

...

Create a consumer:

Now we can create a consumer and credentials to start using this API with basic auth:

curl -i -X POST http://localhost:8001/consumers/ \
  --data "username=consumer1"

A consumer in Kong is an extra abstraction over users, allowing us to have multiple users per consumer.

As an example, let's imagine that this API is going to be consumed by multiple companies. Each of these companies will be an independent consumer, so they can have their own users.

Create Credentials:

Since we are going to use basic-auth, we need to create a user with a password:

curl -i -X POST http://localhost:8001/consumers/consumer1/basic-auth \
  --data "username=charles" \
  --data "password=ch@plin"

In this command we are adding a user with username “charles” and password “ch@plin” to consumer1.
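To make the "one consumer, several users" idea concrete, here is a small sketch that only prints the admin API calls for a consumer and its users, without executing them. The consumer and user names are made up for illustration, `<password>` is a placeholder you would fill in yourself, and `print_credential_calls` is a hypothetical helper.

```shell
# Admin API address used throughout this post; override it if yours differs.
ADMIN_URL=${ADMIN_URL:-http://localhost:8001}

# Print (do not run) the calls that create one consumer and a
# basic-auth credential for each of its users.
# Usage: print_credential_calls <consumer> <user> [user...]
print_credential_calls() {
  consumer=$1
  shift
  printf 'curl -i -X POST %s/consumers/ --data "username=%s"\n' \
    "$ADMIN_URL" "$consumer"
  for user in "$@"; do
    printf 'curl -i -X POST %s/consumers/%s/basic-auth --data "username=%s" --data "password=<password>"\n' \
      "$ADMIN_URL" "$consumer" "$user"
  done
}

# Hypothetical company with two users.
print_credential_calls company-a alice bob
```

Printing the commands first makes it easy to review them before pasting them into a terminal.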

Now we can try to access our /myusers endpoint again:

curl -i -X GET \
  -u charles:ch@plin \
  http://localhost:8000/myusers/1

HTTP/1.1 200 OK

...

As you can see, we now get a 200 OK back. Here the authentication is handled by Kong, and our app only cares about the logic of our API.
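If you are curious what `-u charles:ch@plin` actually sends, HTTP basic auth is just an Authorization header carrying the base64 of `username:password`. A minimal sketch, assuming the `base64` command-line tool is available; `basic_auth_header` is a hypothetical helper name.

```shell
# Build the header that curl's -u option sends for HTTP basic auth.
# Usage: basic_auth_header <username> <password>
basic_auth_header() {
  printf 'Authorization: Basic %s\n' "$(printf '%s:%s' "$1" "$2" | base64)"
}

basic_auth_header charles 'ch@plin'
# prints: Authorization: Basic Y2hhcmxlczpjaEBwbGlu
```

Note that base64 is an encoding, not encryption, so anyone who sees the header can read the credentials.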

Kong can do way more than authentication; check out its plugins to see all of its capabilities.